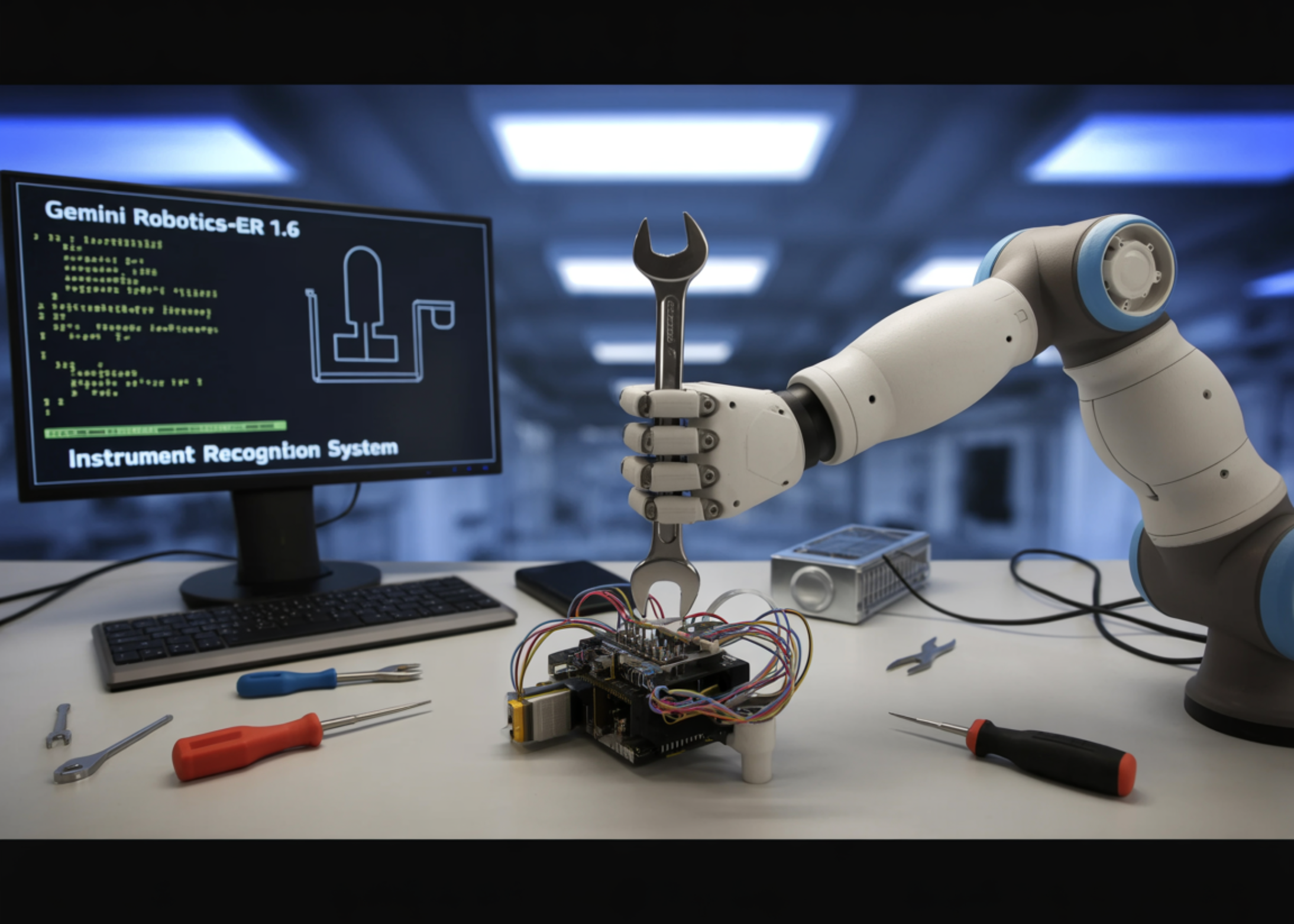

Google DeepMind Releases Gemini Robotics-ER 1.6: Bringing Enhanced Embodied Reasoning and Instrument Reading to Physical AI

The Google DeepMind research team has introduced Gemini Robotics-ER 1.6, a significant upgrade to its embodied reasoning model, which is designed to serve as the ‘cognitive brain’ of robots operating in real-world environments. The model specializes in reasoning capabilities critical for robotics, including visual and spatial understanding, task planning, and success detection. It acts as the high-level reasoning model for a robot and can execute tasks by natively calling tools such as Google Search, vision-language-action models (VLAs), or any other third-party user-defined functions.

Here is the key architectural idea to understand: Google DeepMind takes a dual-model approach to robotics AI. Gemini Robotics 1.5 is the vision-language-action (VLA) model — it processes visual inputs and user prompts and directly translates them into physical motor commands. Gemini Robotics-ER, on the other hand, is the embodied reasoning model: it specializes in understanding physical spaces, planning, and making logical decisions, but does not directly control robotic limbs. Instead, it provides high-level insights to help the VLA model decide what to do next. Think of it as the difference between a strategist and an executor — Gemini Robotics-ER 1.6 is the strategist.
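To make the strategist/executor split concrete, here is a minimal Python sketch. It is not DeepMind's API: the `Orchestrator` class, `toy_plan`, and the callables are hypothetical stand-ins, with the planner playing the role of the embodied reasoning model and the executor playing the role of the VLA.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Orchestrator:
    """Hypothetical wiring of a reasoning model (planner) and a VLA (executor)."""
    plan: Callable[[str], List[str]]   # stands in for the embodied reasoning model
    execute: Callable[[str], None]     # stands in for the VLA model
    log: List[str] = field(default_factory=list)

    def run(self, instruction: str) -> List[str]:
        # The planner decides what to do; it never issues motor commands itself.
        for step in self.plan(instruction):
            self.execute(step)         # the executor turns each step into motion
            self.log.append(step)
        return self.log


def toy_plan(instruction: str) -> List[str]:
    # Placeholder planner: splits an instruction on " then ".
    return [part.strip() for part in instruction.split(" then ")]


executed: List[str] = []
robot = Orchestrator(plan=toy_plan, execute=executed.append)
robot.run("pick up the cup then place it on the shelf")
```

In a real system the planner would be a call to the reasoning model and the executor a call to the VLA; the point of the sketch is only that the two roles sit behind separate interfaces.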

What’s New in Gemini Robotics-ER 1.6

Gemini Robotics-ER 1.6 shows significant improvement over both Gemini Robotics-ER 1.5 and Gemini 3.0 Flash, specifically enhancing spatial and physical reasoning capabilities such as pointing, counting, and success detection. But the key addition is a capability that did not exist in prior versions at all: instrument reading.

Pointing as a Foundation for Spatial Reasoning

Pointing — the model’s ability to identify precise pixel-level locations in an image — is far more powerful than it sounds. Points can be used to express spatial reasoning (precision object detection and counting), relational logic (making comparisons such as identifying the smallest item in a set, or defining from-to relationships like ‘move X to location Y’), motion reasoning (mapping trajectories and identifying optimal grasp points), and constraint compliance (reasoning through complex prompts like “point to every object small enough to fit inside the blue cup”).

In internal benchmarks, Gemini Robotics-ER 1.6 demonstrates a clear advantage over its predecessor. It correctly identifies the number of hammers, scissors, paintbrushes, pliers, and garden tools in a scene, and it does not point to requested items that are absent from the image, such as a wheelbarrow and a Ryobi drill. In comparison, Gemini Robotics-ER 1.5 fails to identify the correct number of hammers or paintbrushes, misses the scissors altogether, and hallucinates a wheelbarrow. For robotics practitioners, this matters because hallucinated object detections in a robotic pipeline can cause cascading downstream failures: a robot that ‘sees’ an object that isn’t there will attempt to interact with empty space.
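As a rough illustration of how pointing output can feed counting and absence checks, the sketch below parses a list of labeled points. The response format is an assumption: it follows the `[{"point": [y, x], "label": ...}]` convention with coordinates normalized to 0-1000 that earlier Gemini Robotics-ER documentation describes, and it may differ for 1.6. The `summarize` helper and the sample data are hypothetical.

```python
import json
from collections import Counter
from typing import List, Tuple


def to_pixels(point: List[float], width: int, height: int) -> Tuple[int, int]:
    """Convert an assumed [y, x] point on a 0-1000 scale to (x, y) pixels."""
    y_norm, x_norm = point
    return round(x_norm * width / 1000), round(y_norm * height / 1000)


def summarize(raw: str, requested: List[str], width: int, height: int):
    """Count points per label and list requested labels that received no point."""
    items = json.loads(raw)
    counts = Counter(item["label"] for item in items)
    pixels = [(item["label"], to_pixels(item["point"], width, height)) for item in items]
    # A requested label with zero points is treated as "not present in the scene".
    absent = [label for label in requested if counts[label] == 0]
    return counts, pixels, absent


# Hypothetical model output for a 640x480 image.
sample = (
    '[{"point": [500, 250], "label": "hammer"},'
    ' {"point": [100, 900], "label": "hammer"},'
    ' {"point": [750, 500], "label": "pliers"}]'
)
counts, pixels, absent = summarize(
    sample, ["hammer", "pliers", "wheelbarrow"], width=640, height=480
)
```

A downstream planner could refuse to schedule any manipulation step for labels in `absent`, which is one way to keep a missing object from turning into a grasp at empty space.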

Success Detection and Multi-View Reasoning

In robotics, knowing when a task is finished is just as important as knowing how to start it. Success detection serves as a critical decision-making engine that allows an agent to intelligently choose between retrying a failed attempt or progressing to the next stage of a plan.

This is a harder problem than it looks. Most modern robotics setups include multiple camera views such as an overhead and wrist-mounted feed. This means a system needs to understand how different viewpoints combine to form a coherent picture at each moment and across time. Gemini Robotics-ER 1.6 advances multi-view reasoning, enabling it to better fuse information from multiple camera streams, even in occluded or dynamically changing environments.
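The retry-or-advance decision described in this section can be sketched as a small control loop. This is an illustrative outline only: `run_plan`, `fake_attempt`, and `fake_check` are hypothetical names, and in practice the success check would be a query to the reasoning model over one or more camera views.

```python
from typing import Callable, Dict, List


def run_plan(
    steps: List[str],
    attempt: Callable[[str], None],
    succeeded: Callable[[str], bool],
    max_retries: int = 2,
) -> List[str]:
    """Run steps in order; retry a step until the success check passes or retries run out."""
    history: List[str] = []
    for step in steps:
        for try_index in range(max_retries + 1):
            attempt(step)
            if succeeded(step):
                history.append(f"{step}: ok after {try_index + 1} attempt(s)")
                break
        else:
            # No attempt succeeded: record the failure and abandon the rest of the plan.
            history.append(f"{step}: gave up")
            break
    return history


attempts: Dict[str, int] = {}


def fake_attempt(step: str) -> None:
    attempts[step] = attempts.get(step, 0) + 1


def fake_check(step: str) -> bool:
    # In this toy setup, "grasp" only succeeds on its second try.
    return attempts[step] >= (2 if step == "grasp" else 1)


history = run_plan(["reach", "grasp", "lift"], fake_attempt, fake_check)
```

Bounding the retries is a design choice in this sketch; without a limit, a success detector that is wrong in a consistent way would stall the robot indefinitely.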

Instrument Reading: A Real-World Breakthrough

The genuinely new capability in Gemini Robotics-ER 1.6 is instrument reading — the ability to interpret analog gauges, pressure meters, sight glasses, and digital readouts in industrial settings. This task stems from facility inspection needs, a critical focus area for Boston Dynamics. Spot, a Boston Dynamics robot, is able to visit instruments throughout a facility and capture images of them for Gemini Robotics-ER 1.6 to interpret.

Instrument reading requires complex visual reasoning: one must precisely perceive a variety of inputs (needles, liquid levels, container boundaries, tick marks, and more) and understand how they all relate to each other. In the case of sight glasses, this involves estimating how much liquid fills the sight glass while accounting for distortion from the camera perspective. Gauges typically have text describing the unit, which must be read and interpreted, and some have multiple needles referring to different decimal places that need to be combined.
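Two of the calculations mentioned here are simple once the relevant points have been located. The sketch below is an assumption-laden illustration, not the model's internal method: `fill_fraction` ignores perspective distortion entirely, and `combine_dials` assumes each needle has already been read as a single digit. Both function names are hypothetical.

```python
from typing import Sequence


def fill_fraction(top_y: float, bottom_y: float, level_y: float) -> float:
    """Fraction of a vertical sight glass that is filled, from pixel rows.

    Image rows grow downward, so bottom_y must exceed top_y.
    Perspective distortion is ignored in this simplified version.
    """
    if bottom_y <= top_y:
        raise ValueError("bottom_y must be below top_y in image coordinates")
    fraction = (bottom_y - level_y) / (bottom_y - top_y)
    return min(1.0, max(0.0, fraction))  # clamp noisy points to [0, 1]


def combine_dials(digits: Sequence[int], smallest_place: float = 1.0) -> float:
    """Combine per-dial digits (most significant first) into one reading."""
    value = 0
    for digit in digits:
        if not 0 <= digit <= 9:
            raise ValueError("each dial digit must be in 0..9")
        value = value * 10 + digit
    # smallest_place is the unit of the last dial, e.g. 0.1 for tenths.
    return value * smallest_place


glass = fill_fraction(top_y=100, bottom_y=500, level_y=200)
meter = combine_dials([4, 7, 2])
```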

Gemini Robotics-ER 1.6 achieves its instrument readings by using agentic vision (a capability that combines visual reasoning with code execution, introduced with Gemini 3.0 Flash and extended in Gemini Robotics-ER 1.6). The model takes intermediate steps: first zooming into an image to get a better read of small details in a gauge, then using pointing and code execution to estimate proportions and intervals, and ultimately applying world knowledge to interpret meaning.
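The "estimate proportions and intervals" step can be pictured as interpolating a needle angle between two known tick marks. The following is a minimal sketch under stated assumptions: the four input points (gauge center, needle tip, minimum tick, maximum tick) are taken as already located, the scale is assumed linear and clockwise, and `read_gauge` is a hypothetical helper rather than anything the model exposes.

```python
import math
from typing import Tuple

Point = Tuple[float, float]  # (x, y) in pixels


def angle_deg(center: Point, tip: Point) -> float:
    """Clockwise on-screen angle of the ray center->tip, in degrees within [0, 360)."""
    dx, dy = tip[0] - center[0], tip[1] - center[1]
    # Image y grows downward, so atan2(dy, dx) increases clockwise on screen.
    return math.degrees(math.atan2(dy, dx)) % 360


def read_gauge(
    center: Point,
    needle_tip: Point,
    min_tick: Point,
    max_tick: Point,
    min_value: float,
    max_value: float,
) -> float:
    """Linearly interpolate a reading from the needle angle on a clockwise scale."""
    start = angle_deg(center, min_tick)
    sweep = (angle_deg(center, max_tick) - start) % 360   # clockwise span of the scale
    offset = (angle_deg(center, needle_tip) - start) % 360
    if sweep == 0:
        raise ValueError("min_tick and max_tick must lie at different angles")
    return min_value + (offset / sweep) * (max_value - min_value)


# Scale runs clockwise from lower-left to lower-right; the needle points straight up.
reading = read_gauge(
    center=(0, 0), needle_tip=(0, -1), min_tick=(-1, 1), max_tick=(1, 1),
    min_value=0.0, max_value=10.0,
)
```

The sketch does not handle a needle resting in the dead zone below the scale, where `offset` exceeds `sweep`; a production version would need to detect and reject that case.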

Reported success rates on instrument reading are 23% for Gemini Robotics-ER 1.5, 67% for Gemini 3.0 Flash, 86% for Gemini Robotics-ER 1.6, and 93% for Gemini Robotics-ER 1.6 with agentic vision. One important caveat: Gemini Robotics-ER 1.5 was evaluated without agentic vision because it does not support that capability. The other three models were evaluated with agentic vision enabled for the instrument reading task, so the 23% baseline reflects a missing capability as much as a gap in raw performance. For AI developers comparing model generations, this distinction matters: the benchmark column is not a like-for-like comparison.

Key Takeaways

- Gemini Robotics-ER 1.6 is a reasoning model, not an action model: It acts as the high-level ‘brain’ of a robot — handling spatial understanding, task planning, and success detection — while the separate VLA model (Gemini Robotics 1.5) handles the actual physical motor commands.

- Pointing is more powerful than it looks: Gemini Robotics-ER 1.6’s pointing capability goes far beyond simple object detection — it enables relational logic, motion trajectory mapping, grasp point identification, and constraint-based reasoning, all of which are foundational to reliable robotic manipulation.

- Instrument reading is the biggest new capability: Motivated by industrial facility inspection with Boston Dynamics’ Spot robot, Gemini Robotics-ER 1.6 can now read analog gauges, pressure meters, and sight glasses with a 93% success rate using agentic vision, up from 23% for Gemini Robotics-ER 1.5, which does not support agentic vision.

- Success detection is what enables true autonomy: Knowing when a task is actually complete — across multiple camera views, in occluded or dynamic environments — is what allows a robot to decide whether to retry or move to the next step without human intervention.
